Digital Marketing Case Study

Planful Sees Organic Search Improvement by Fixing Indexed Pages Blocked by Robots.txt

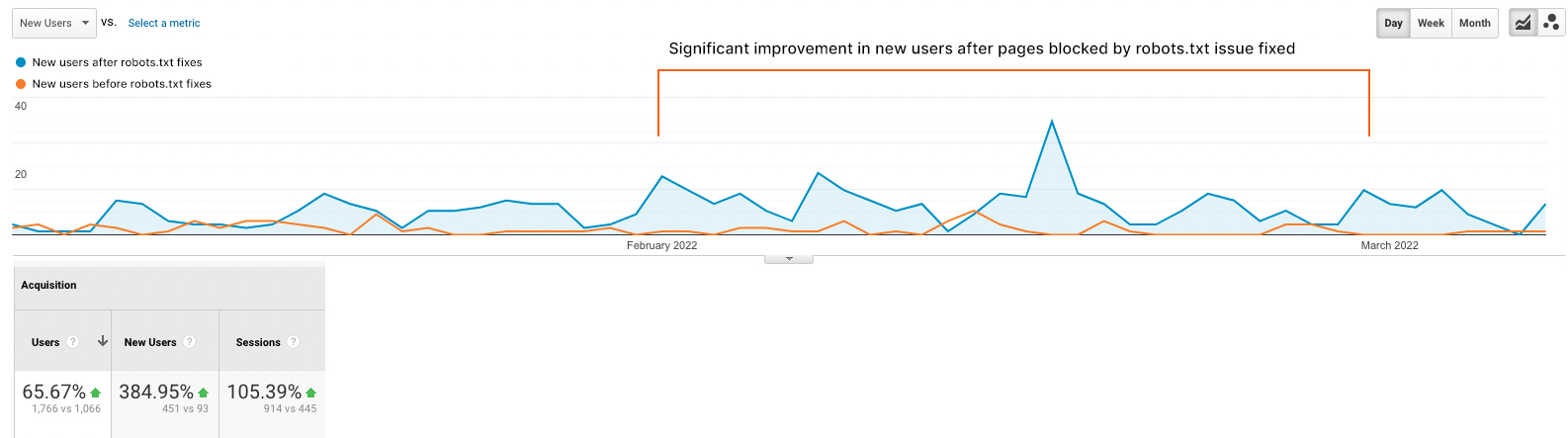

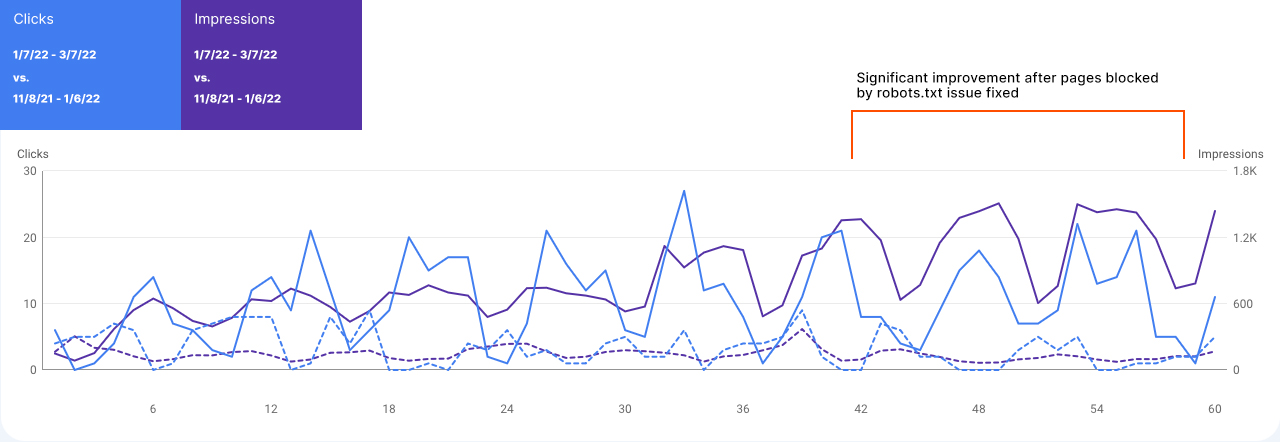

Monitoring, diagnosing, and fixing issues with a site’s robots.txt file is a crucial component of technical SEO in any holistic white hat SEO strategy, and it can provide an incremental boost to organic search performance, especially for larger domains with many URLs. An important page category on Planful’s domain was indexed though blocked by robots.txt and was therefore earning fewer organic search impressions and clicks. A robots.txt SEO audit was needed to identify and fix the blocked pages so that they could be shown in SERPs more often.

While Firebrand had been improving technical SEO for this domain for some time (Core Web Vitals, status code audit, duplicate content), we conducted a deeper analysis of robots.txt best practices. That analysis uncovered incorrect robots.txt disallow instructions that caused an entire set of pages to be indexed though blocked by the robots.txt file. After rolling out the fix for these disallow instructions, along with additional robots.txt SEO optimizations, Planful recovered valuable organic search impressions and clicks for this set of pages.
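
To illustrate the kind of check a robots.txt audit involves, here is a minimal sketch in Python that flags which URLs in a list are blocked by a set of disallow rules. The robots.txt contents, the `/blog/` path, the `example.com` URLs, and the `blocked_urls` helper are all hypothetical and are not taken from Planful’s site or Firebrand’s tooling. The sketch relies on the standard library’s `urllib.robotparser`, whose rule matching is simpler than Googlebot’s (for example, it does not interpret wildcards), so treat the results as a first pass rather than a definitive answer.

```python
from __future__ import annotations

from urllib.robotparser import RobotFileParser


def blocked_urls(robots_txt: str, urls: list[str], user_agent: str = "Googlebot") -> list[str]:
    """Return the URLs that the given robots.txt rules disallow for user_agent."""
    parser = RobotFileParser()
    # Parse the rules from a string instead of fetching them over the network.
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical robots.txt with an overly broad disallow rule (assumption).
ROBOTS_TXT = """\
User-agent: *
Disallow: /blog/
"""

# Hypothetical URLs to audit, e.g. exported from a sitemap or crawl (assumption).
URLS = [
    "https://www.example.com/blog/some-post/",
    "https://www.example.com/pricing/",
]

if __name__ == "__main__":
    for url in blocked_urls(ROBOTS_TXT, URLS):
        print("Blocked by robots.txt:", url)
```

In practice, the URL list would come from the pages expected to rank, and any URL the check flags is a candidate for a disallow rule that needs to be narrowed or removed.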